(In this SmartBits, Anuj Magazine outlines “On Career Growth”. The video is at the end of this blog.)

Career growth can be looked at in two buckets: one is the nonlinearity of a career, and the other is its uni-dimensionality. One thing common to most reasonably sized organisations is that each of them has defined career paths. These paths tend to be designed with an entry role that one gets into straight after college at the bottom and a role at the top.

The role at the top of these ladders is usually that of a VP or a Department Head. Yet the highest designation in the organisation is that of the CEO. Putting these two facts together, we tend to wonder why organisations do not lay out a path all the way to the CEO role. This leads one to question the linear approach of following the career paths that are designed in the organisation.

Mark Templeton, who was CEO of Citrix for around 20 years and is quite respected in his field, said that career paths to the top of an organisation rarely tend to be linear; they always zigzag. One needs to figure out where the next dot is in order to move forward. This questions the rationale behind linear careers. There is nothing wrong with having a predictable career path. It does solve a problem in the organisation. For instance, HR wants predictable processes, and employees want them too, and there is nothing wrong with that, as not everyone wants to be a CEO. But there are other merits to following a nonlinear path.

The second part is uni-dimensionality. Let us take the example of startups. When a startup is new and product-market fit has not been achieved, people play different roles while holding one designation: marketing, coding, testing, or hustling around and doing sales. In early-stage organisations, one can afford to be a generalist in the interest of moving the organisation forward. But when it comes to scale, uni-dimensionality and specialisation matter: deep knowledge of one subject, or of a related set of technologies, helps scale the organisation and take it to the next level.

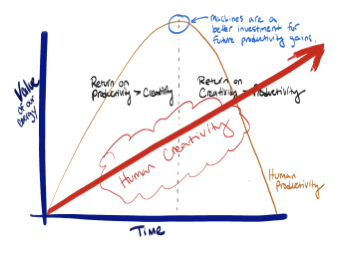

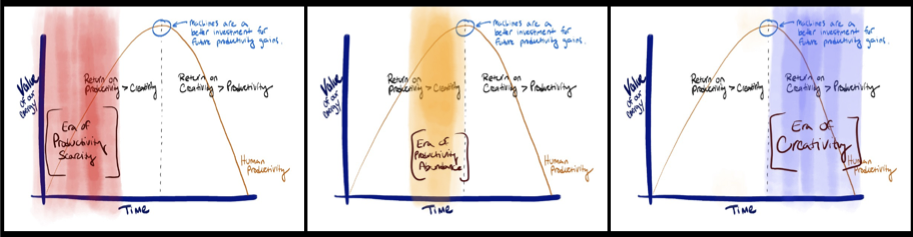

Should I be a generalist or a specialist? If you want to be a specialist, choose a field that is going to be relevant in the time to come. The people who chose artificial intelligence and machine learning fifteen years back are reaping the rewards of that choice. In the IPL we see around 200-odd cricketers from India, which is hardly two to three percent of the cricket-playing population, or even less, and these are hyper-specialised individuals who specialise in their areas. When choosing a specialised field, it is better to have the conviction to be in the top ten or twenty percent, so that one can reap the rewards in the time to come.

Generalists are people who are more adaptable, who can learn a new skill in a shorter time and deliver value. This is more akin to the gig economy: pick up a role for some time and then move on to something else. There is nothing wrong with either approach; both have their merits and demerits. That said, hyper-specialisation is going to be the thing in the future.

The QA profession has been under pressure from external forces, as decision makers in organisations want to see more value. It comes down to an economic decision: we have always spoken of the cost of quality, and we never use the term profit of quality. We need people who can represent QA in the boardroom, where its value can be showcased, and that is lacking at the moment.