T Ashok @ash_thiru on Twitter

Summary

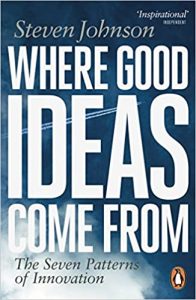

As software test practitioners we revel in finding bugs, and as managers and engineers we are focused on fixing them. Steven Johnson, in his book “Where Good Ideas Come From: The Seven Patterns of Innovation”, devotes a chapter to errors as a source of innovation. This article summarises key ideas from that chapter, outlining how an erroneous hunch changed history, how contamination is useful, how being wrong forces you to explore, how paradigms shift with anomalies and how error transforms into insight.

Brilliant software testing is interesting, kinda like Star Trek, going into the unknown. It requires discipline, creativity, logic, exploration skills, note taking, observation, association, hypothesising, proving and juggling multiple hats frequently. It is not a mundane act of finding bugs and getting them fixed. It is not just about scripting tests and running them to death. It is about exploring the unknown, driving along buggy paths, discovering new ideas, suggesting improvements, enhancing experience, embedding testability and having the joy of creating beautiful software by looking at the interesting messiness.

When I read the book “Where Good Ideas Come From: The Seven Patterns of Innovation” by Steven Johnson, I was delighted to learn that one of the patterns was “ERRORS”. An entire chapter on this was a treat to read. As a test practitioner I have always meandered along the paths of anomalies, understood implementation and intentions deeply, and come up with interesting suggestions to improve and enhance experience. Errors have been my best partner in perfecting what I do and coming up with interesting ideas.

In this article I have summarised key facets of the chapter “ERRORS”, outlining how an erroneous hunch changed history, how contamination is useful, how being wrong forces you to explore, how paradigm shifts begin with anomalies in the data and how error transforms into insight.

An erroneous hunch changed history

The strange correlation between the spark gap transmitter and the gas flame burner turned out to have nothing to do with the electromagnetic spectrum. The flame was responding to the ordinary sound waves emitted by the spark gap transmitter. But because De Forest had begun with the erroneous notion that the gas flame was detecting electric signals, all his iterations of the Audion involved some low-pressure gas inside the device, which severely limited its reliability. It took a decade for researchers at GE to realise that the triode performed best in a vacuum, hence the name vacuum tube. The vacuum tube, the precursor to the stunning electronics that would sweep the world in the next few decades, was born from an error. It all started with De Forest watching a gas flame shift from red to white when he triggered a surge of voltage through a spark gap, and forming a hunch that gas could be employed as a wireless detector more sensitive than anything Marconi or Tesla had created to date. An erroneous hunch changed history.

Contamination is useful

Alexander Fleming discovered the medicinal virtues of penicillin when the mould accidentally infiltrated a culture of Staphylococcus left by an open window in his lab. The antibiotic was born. A bunch of iodised silver plates left by Louis Daguerre in a cabinet packed with chemicals formed a perfect image when fumes spilled from a jar of mercury. Photography was born. Wilson Greatbatch grabbed the wrong resistor from the box while building an oscillator and found it was pulsing to a familiar rhythm. The pacemaker was born. Contamination is useful.

Being right keeps you in place. Being wrong forces you to explore.

The errors of the great mind exceed in number those of the less vigorous one. Error often creates a path that leads you out of comfortable assumptions. De Forest was wrong about the utility of gas as a detector, but he kept probing at the edges of that error until he hit upon something that was genuinely useful. Being right keeps you in place. Being wrong forces you to explore.

Paradigms shift with anomalies.

Paradigm shifts begin with anomalies in the data, when scientists find that their predictions keep turning out wrong, says Thomas Kuhn in “The Structure of Scientific Revolutions”. Joseph Priestley thought a plant kept in a sealed jar would die, deprived of oxygen. He turned out to be wrong, and in the process discovered that plants expel oxygen as part of photosynthesis. Being wrong on its own doesn’t unlock new doors in the adjacent possible, but it does force us to look for them. Paradigms shift with anomalies.

Transforming error into insight

Arno Penzias and Robert Wilson thought the noise in the cosmic radiation they were measuring was due to faulty equipment, until a chance conversation with a nuclear physicist planted the idea that it might instead be the still-lingering reverberation of the Big Bang. That changed their opinion that the telescope was the problem. Coming at a problem from a different perspective, with few preconceived ideas about what the correct result should be, can enable one to conceptualise scenarios where the mistake might actually be useful. Transforming error into insight.

Noise free environments end up being more sterile

A few decades ago Prof. Charlan Nemeth began investigating the relationship between noise, dissent and creativity in group environments. When her subjects were exposed to inaccurate descriptions of the slides, they became more creative. Deliberately introducing noise forced the subjects to explore more of the adjacent possible, enabling good ideas to emerge. Noise-free environments end up being more sterile and predictable in their output. The best innovation labs are always a little contaminated.

Error is what made humans possible in the first place.

Without noise, evolution would stagnate, an endless series of perfect copies, incapable of change. But because DNA is susceptible to error (whether mutations in the code itself or transcription mistakes during replication), natural selection has a constant source of new possibilities to test. Most of the time, these errors lead to disastrous outcomes, or have no effect whatsoever. But every now and then, a mutation opens up a new wing of the adjacent possible. From an evolutionary perspective, it’s not enough to say “to err is human”. Error is what made humans possible in the first place.

Mistakes are not the goal, they are an inevitable step in the path of innovation.

When the going gets tough, life tends to gravitate towards more innovative reproductive strategies, sometimes by introducing more noise into the signal of the genetic code, and sometimes by allowing genes to circulate more quickly through the population. Innovative environments thrive on useful mistakes, and suffer when the demands of quality control overwhelm them. Mistakes are not the goal; they are an inevitable step on the path of true innovation.

Truth is uniform and narrow, error is endlessly diversified.

Benjamin Franklin

“Perhaps the history of the errors of mankind, all things considered, is more valuable and interesting than that of their discoveries. Truth is uniform and narrow; it constantly exists, and does not seem to require so much an active energy, as a passive aptitude of soul in order to encounter it. But error is endlessly diversified.”